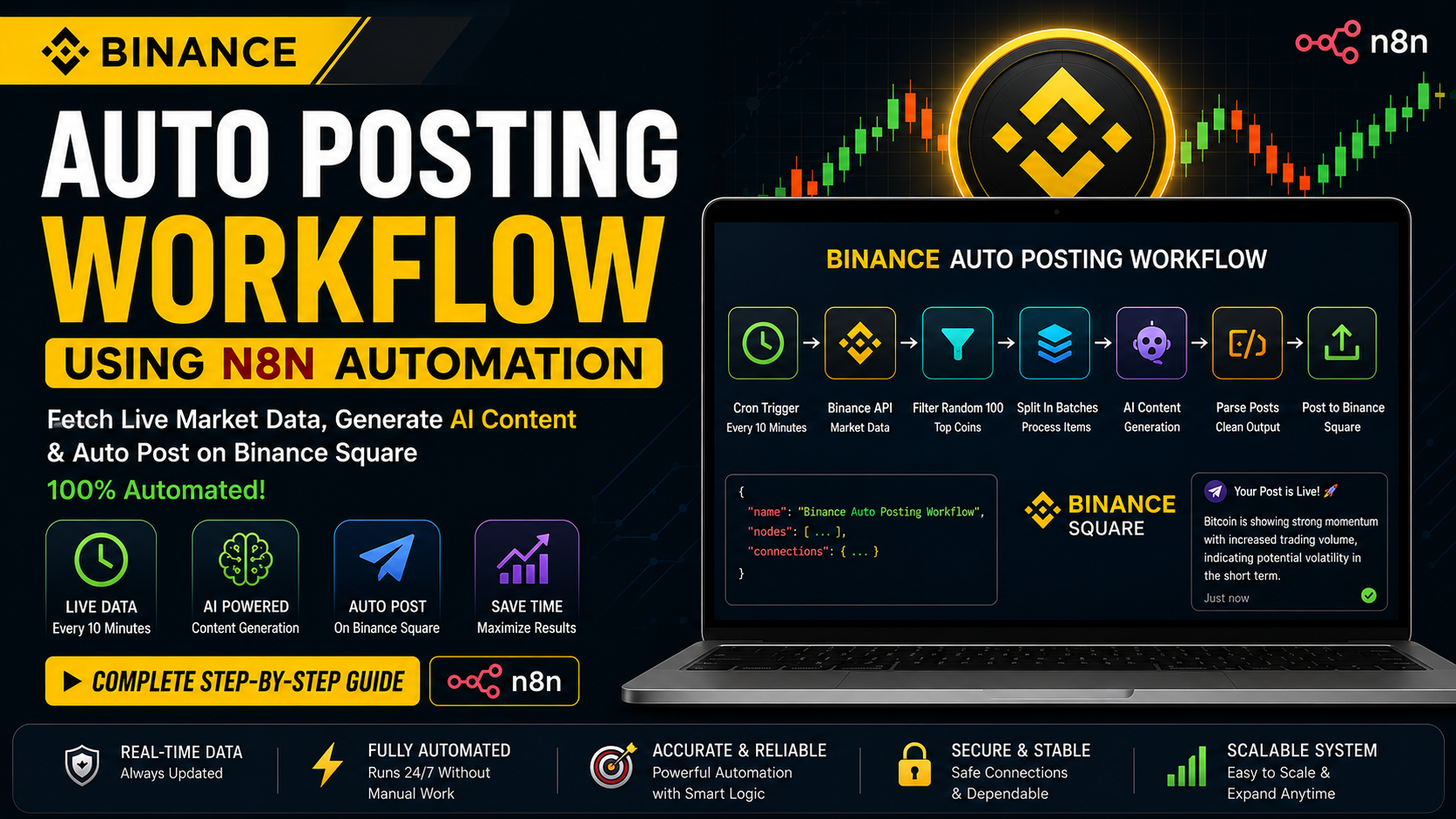

The workflow is an automated content-generation system built with n8n that generates cryptocurrency-related posts and submits them to Binance Square. It functions as a fully automated pipeline, taking live market data from Binance, processing it through multiple transformation stages, generating human-like content using AI, formatting the resulting data, and submitting it to an external API for publication. There are no manual interventions throughout the workflow, and it is set to run on a scheduled cycle, providing continuous content generation throughout the day.

Additionally, the JavaScript code nodes within this same flow play a significant role because they define all logic and provide the means to transform data through processes such as filtering market data, selecting which coins to use, randomizing the output, and formatting AI-generated text for submission. Without the JavaScript code node sections in this workflow, the workflow would consist solely of moving unfiltered market data, without any intelligence or structure.

Download JSON |

Join Binance |

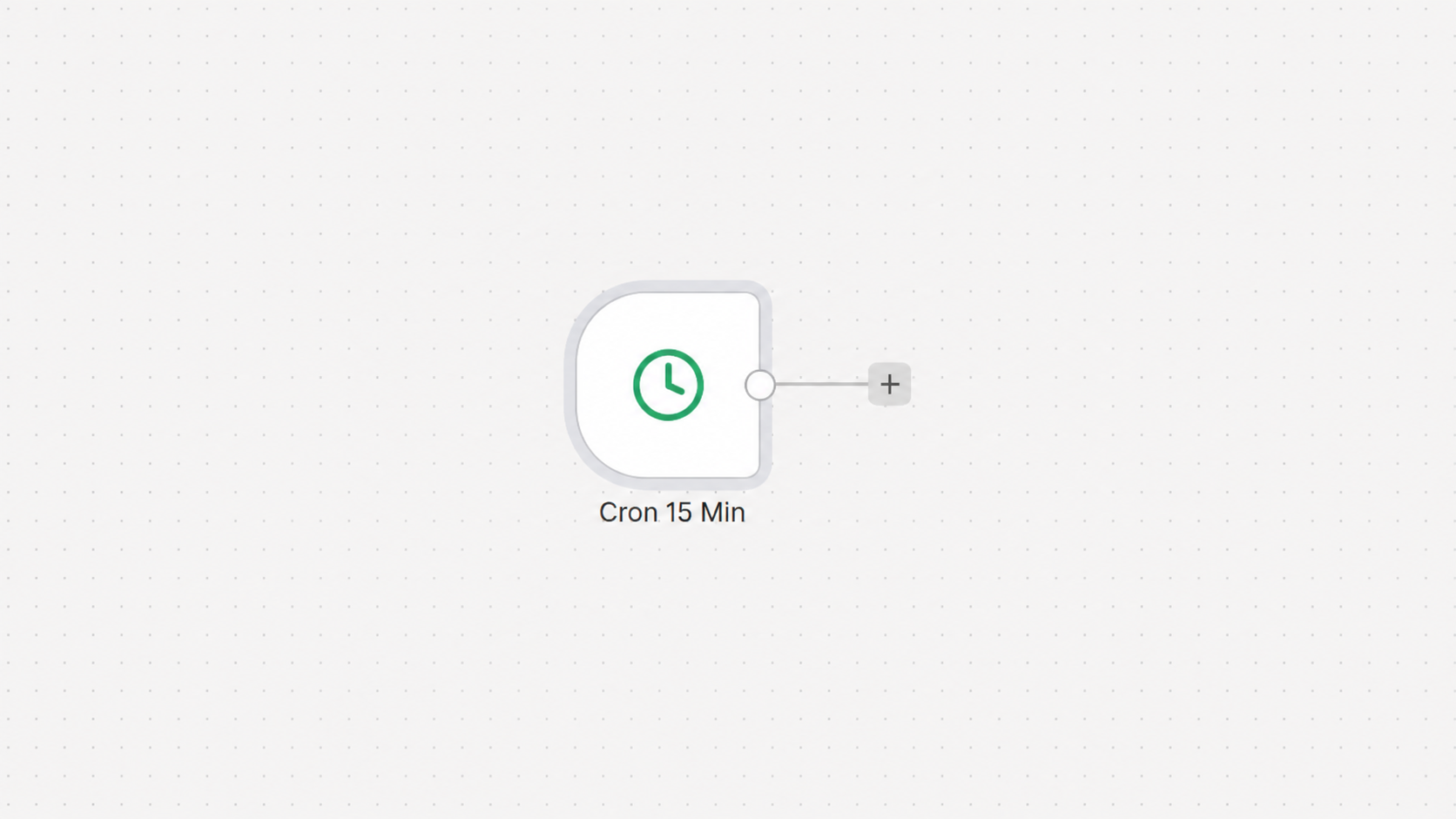

Scheduled Trigger and Workflow Activation

Scheduled Trigger and Workflow Execution

The Cron Trigger node is at the center of all automation in n8n and serves as the primary trigger for automatically initiating a workflow after a predetermined interval, without user interaction. In this case, the trigger is set to run every 10 minutes; therefore, the automation process runs continuously, 24 hours a day, 7 days a week.

One reason it uses this type of trigger is to ensure there is always an effective way to stay engaged and provide new cryptocurrency-related content. Rather than having the user manually run the command when there is a new post or posts to be fetched, the cron trigger automatically executes the entire process to ensure a consistent supply of crypto content.

When configuring the Cron Trigger Node, the scheduling options tell the node how often to execute the workflow, as defined by the everyX mode, and by what unit of time. The value is set to 10, and the time unit is set to minutes, so the workflow will run every 10 minutes. Each execution cycle will trigger the timer and reset it until it is triggered by the second ten-minute period, at which point the workflow will be executed again.

The code logic behind this scheduling system can be understood from the configuration below:

{

"triggerTimes": {

"item": [

{

"mode": "everyX",

"value": 10,

"unit": "minutes"

}

]

}

}

When using “mode”: “everyX”, n8n will continuously re-run your workflow every set amount of time, with “value”:10 defining the number of times, and “unit”: “minutes” specifying that it is counted in minutes; therefore, the workflow will run automatically every 10 minutes.

Once the trigger fires, the automation will restart. The workflow will retrieve live market data from the Binance APIs to filter the trading pairs, identify high-volume cryptocurrencies, and randomly order the dataset. The workflow then processes the coins one at a time, generates blockchain-based crypto posts using AI, removes errors from the generated text, and posts content directly to Binance Square. This process will run automatically to create new crypto content 24/7, without any human input.

Reliability and consistency are two additional major advantages of the Cron Trigger node. Cryptocurrency markets operate 24/7, so manual content publishing would not be practical or efficient. The scheduled trigger ensures that articles will continue to be produced while the author is offline. This enables the creation of a fully automated publishing engine capable of producing a steady stream of content on Binance Square.

Workflow scalability also comes from the Cron Trigger node, as new processing nodes can be added to the workflow after the trigger with no change to the schedule logic. The trigger allows the system to remain active, regardless of whether 10 coins or thousands of market pairs are being processed, and will continue to operate as scheduled until reprogrammed.

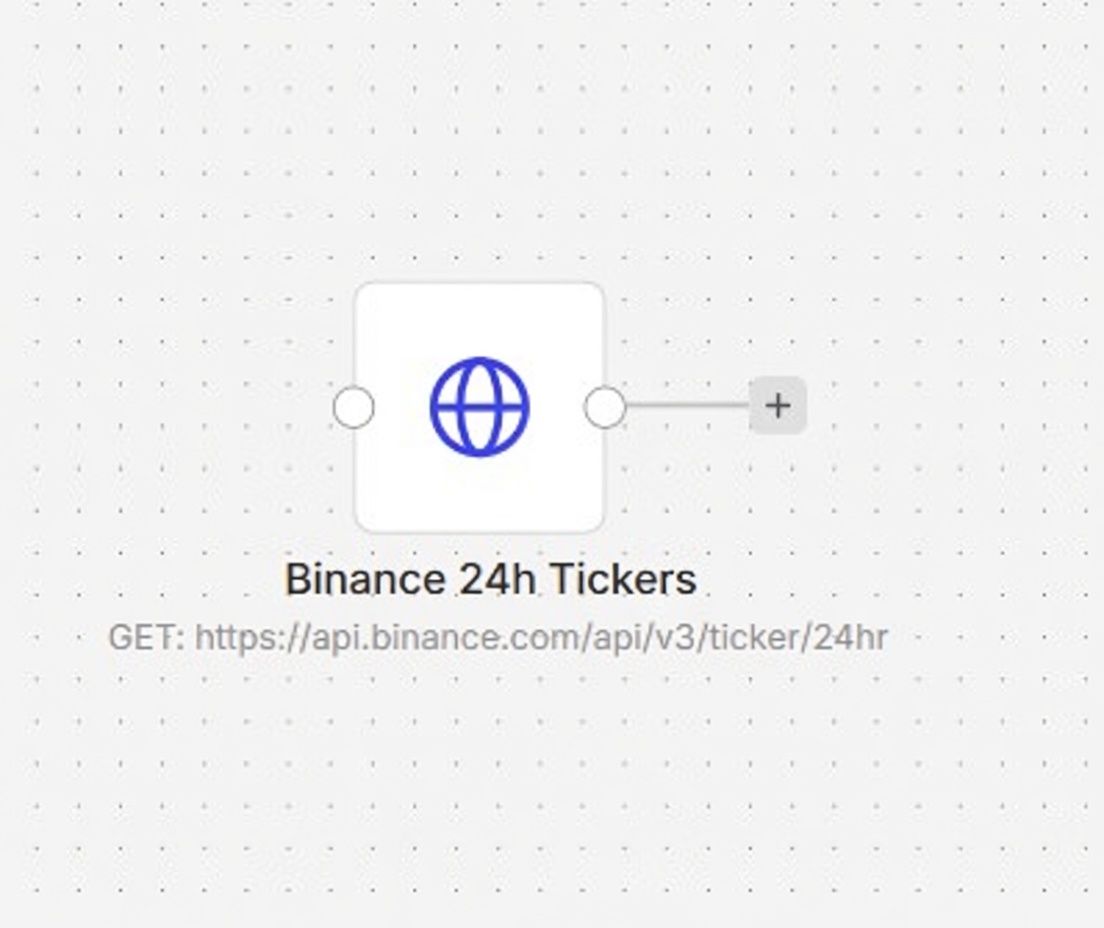

Fetching Market Data from Binance

As soon as the Cron Trigger turns on Workflow, the first major technical operation will be done using an HTTP Request Node, which will create a direct connection to the Binance Public API Infrastructure. This particular Node will collect real–time market data about cryptocurrencies from the Binance Exchange for use in filtering, analysis, and the generation of AI-created content, and will finally publish the documents automatically using information that is not outdated or does not represent the current state of the market. The HTTP Request Node will send a GET request to the following endpoint of the Binance API:

https://api.binance.com/api/v3/ticker/24hr

This endpoint is one of the most important public market data APIs Binance provides, as it returns ticker statistics for all trading pairs on Binance over a 24-hour period. Therefore, upon receipt of the response from this API, there will be a very large dataset in JSON format, containing multiple entries for thousands of cryptocurrency pairs, along with key market indicators for each pair.

By executing your request, Binance supplies real-time market information, such as trading symbols, opening price, high and low price in the previous 24 hours, percentage price movement, total volume of all trades, total volume of quote asset, number of trades, weighted average price, and many other data fields/statistics. This means this endpoint is very useful for building automated crypto systems because you’re getting a current view of the total market activity in one place.

A simplified example of the response structure looks like this:

{

"symbol": "BTCUSDT",

"priceChange": "1520.50",

"priceChangePercent": "2.45",

"weightedAvgPrice": "64350.12",

"lastPrice": "65200.00",

"volume": "18543.12",

"quoteVolume": "1190000000"

}

Each object inside the response array represents one trading pair available on Binance. For example, BTCUSDT represents the Bitcoin to Tether trading market, while ETHUSDT represents Ethereum paired with Tether. The field "priceChangePercent" indicates how much the asset moved in percentage terms during the last twenty-four hours, while "quoteVolume" represents the total trading volume measured in the quote currency. These metrics are extremely important because they help identify highly active and trending cryptocurrencies.

However, at this point, we have obtained entirely unrefined raw data; no manipulation or transformation has been performed on it. The Binance API returns raw data associated with many thousands of trading pairs comprising various stable coins, inactive assets, low-liquidity tokens, several fiat currency pairs, and many other redundant assets, which will ultimately be irrelevant for crypto content generation. For example, these would be the trading pairs (USDCUSDT, BUSDUSDT, EURUSDT, TRYUSDT, etc.) of many stable/fiat assets that offer little to no price volatility or opportunities for engagement.

Since this initial data set is both extremely large and noisy, providing it directly to an AI model to generate content would result in low-quality, non-unique output. Therefore, the workflow requires additional processing and filtering before it can utilize the raw response from the Binance API. Hence, the next node in the pipeline will be responsible for analyzing the raw Binance response; this process will consist of removing any extraneous assets, filtering the list of currencies being traded based on levels of activity associated with each currency traded, and selecting only those highly relevant cryptocurrencies that can be utilized to automate the generation of content.

One major technical benefit of the Binance 24-Hour Ticker Endpoint is its performance efficiency. Instead of needing to query each coin individually via a separate API call, this method allows the entire market snapshot to be retrieved in a single batch (and, therefore, it reduces API overhead, increases execution speed, and minimizes risk related to API request rate limits). It can scale consistently while enabling the workflow to handle 100s of new cryptocurrency pairs with each automation cycle.

Using the HTTP Request Node for the knowledge acquisition aspect of the automation process also enables flexibility by reusing the same market dataset for multiple uses (e.g., trend analysis, AI-generated sentiment, market monitoring, volatility tracking, and automated publishing systems). This type of centralized collection of market data will serve as the basis for all downstream intelligent processing operations in large-scale automation architectures.

In general, the Binance API request node serves as the primary data ingestion layer across all elements of the overall automation workflow. It provides a continuous flow of new and real-time cryptocurrency market data through the n8n pipeline, creating an infrastructure for all filtering, analysis, AI generation, and publishing actions.

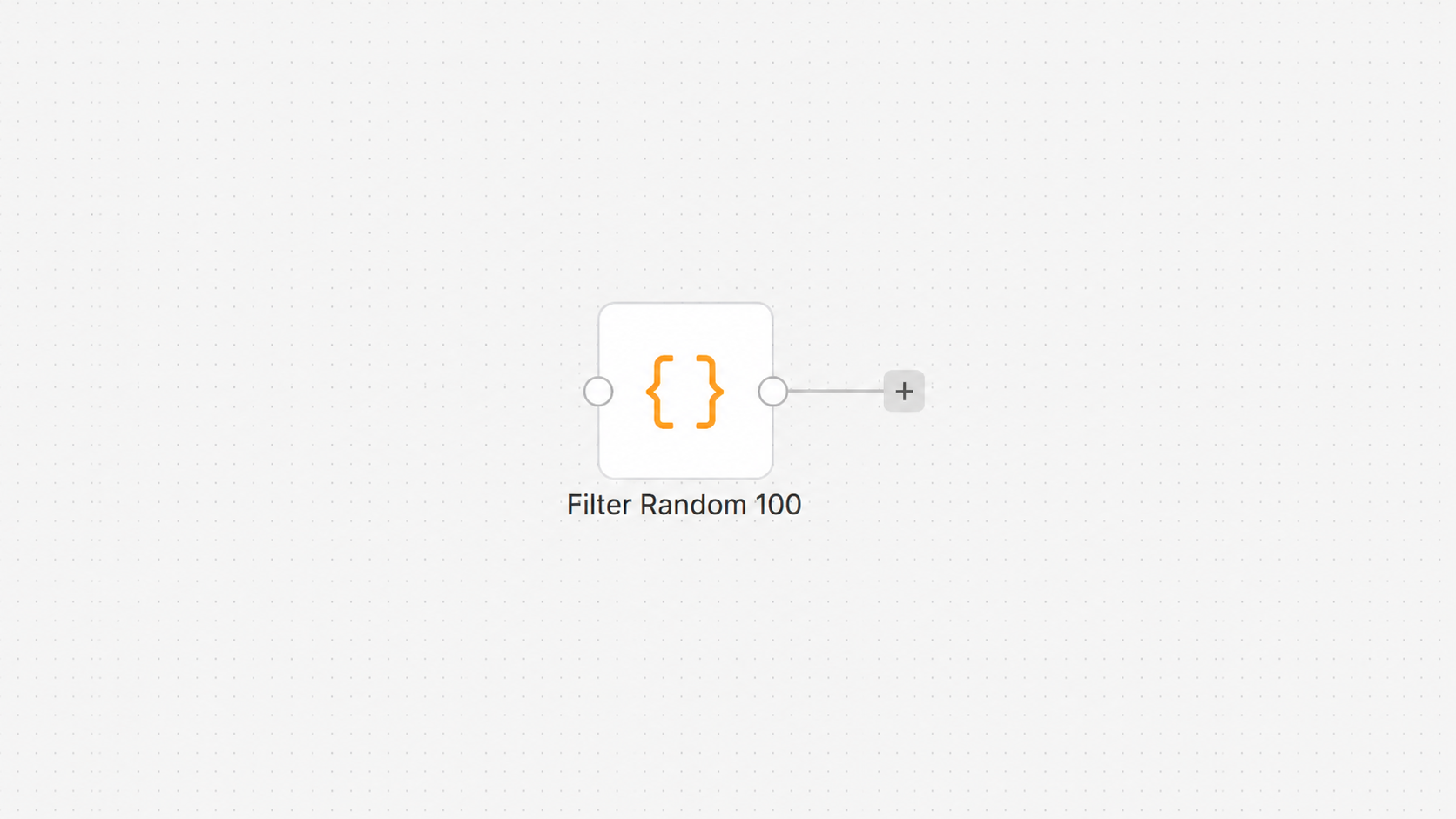

Code Node 1: Filter Random 100 (Full Explanation with Code)

The logic node that processes an incoming stream of raw Binance cryptocurrency market data to produce structured, selected cryptocurrencies for use in an application is the most essential node in the workflow for this reason.

Step 1: Convert Input Data

const coins = $input.all().map(i => i.json);

The flow from the Binance server sends all incoming market data to this line, which converts it into a clean, structured JSON array.

There is a clean pair of data points in the array for each trading pair.

If this were not done, we would not be able to access the data in a form usable with JavaScript.

Step 2: Define Stable Coins List

const stableCoins = ["USDT","USDC","BUSD","TUSD","FDUSD","DAI","EUR","GBP","TRY","BRL","AUD","USDTE","USDE","RLUSD","USDP"];

This array lists all stablecoins and fiat currencies to be excluded.

Step 3: Filter Logic

let filtered = coins.filter(c => {

if (!c.symbol) return false;

const s = c.symbol.toUpperCase();

if (!s.endsWith('USDT')) return false;

const base = s.replace('USDT','');

if (stableCoins.includes(base)) return false;

return true;

});

This block is the core filtering engine.

Step 4: Sorting by Volume

filtered.sort((a,b)=>parseFloat(b.quoteVolume||0)-parseFloat(a.quoteVolume||0));

This line sorts all filtered coins based on trading volume.

Higher volume coins are more active and more relevant for content creation.

So this ensures important coins appear first.

Step 5: Select Top 300

const top300 = filtered.slice(0,300);

Step 6: Shuffle Randomly

for(let i=top300.length-1;i>0;i--){

const j=Math.floor(Math.random()*(i+1));

[top300[i],top300[j]]=[top300[j],top300[i]];

}

Step 7: Remove Duplicates

const seen=new Set();

const result=[];

Step 8 Loop and Build Output

for(const c of top300){

const symbol=c.symbol.replace('USDT','');

if(seen.has(symbol)) continue;

seen.add(symbol);

result.push({

json:{

symbol,

pair:c.symbol,

volume:c.quoteVolume,

change:c.priceChangePercent

}

});

if(result.length>=100) break;

}

The loop takes an individual coin, then:

– Remove the USDT from the symbol to derive a base currency.

– Identify duplicates using a Set.

– Push structured data containing:

– The Symbol

– The Trading pair

– The Volume

– The Price change percentage

It then terminates after selecting 100 coins.

Step 9 Return Final Data

return result;

This sends cleaned and structured data to the next node.

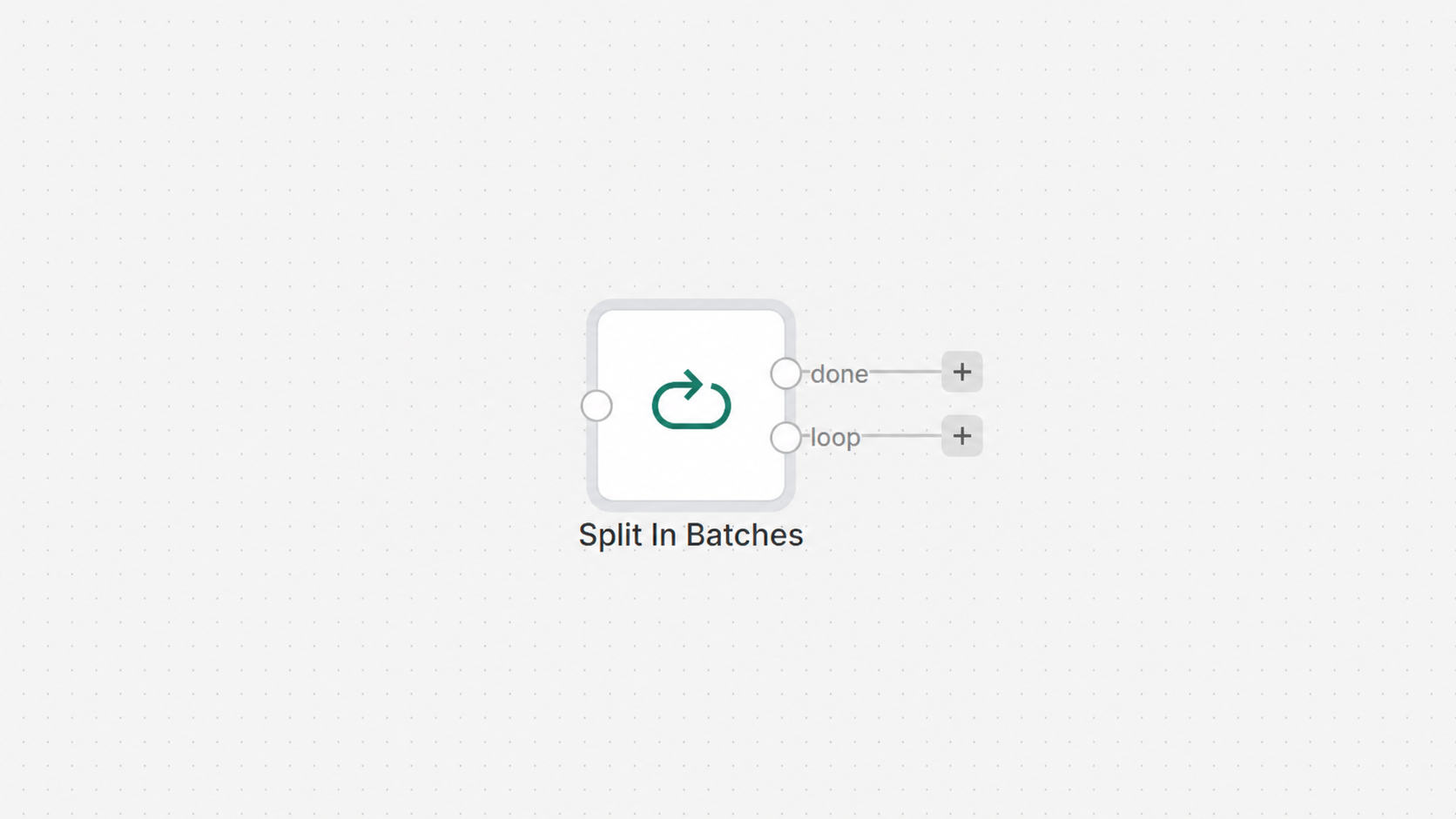

Batch Processing Node

After filtering and selecting the top-performing cryptocurrencies, the filtering and selection process into the first working age is done within the n8n workflow by using the Split In Batches (SIB) node to break the filtered list of 100 selected currencies down into smaller sets (or batches) in order for each coin in the set of selected coins to be processed separately and in order.

This creates an additional level of structure, which is important for increased efficiency in this type of automation workflow that includes artificial intelligence, since large datasets cannot be processed in a single execution without causing performance issues (such as memory overload) and inconsistent results. Therefore, the filtering and selection process will create an executable for a single cryptocurrency symbol (coin) at a time, using the appropriate AI agent for each symbol.

The batching node operates by traversing the filtered coin array created in a previous filtering stage. Instead of promoting the entire array as a single payload, the batching node pulls out each item from the filtered array and feeds it to the subsequent node one at a time. Therefore, if the system consists of 100 coins, then 100 unique cycles of AI processing will be performed on each coin.

This handling method is highly advantageous for the Batching Node in terms of performance and stability. Because of this, the likelihood of the model working successfully decreases, thereby eliminating the potential for overload when it is required to process a considerable volume of data at the same time. Additionally, by providing more focused inputs to the AI model in isolation, rather than processing all coins together, this will improve the overall quality of the data output and reduce the risk of exceeding a single data source/token.

Secondly, it will create a high degree of consistency in generating new content across all the different coins being worked on. Each coin will remain separate (individually), so the AI will produce unique and dedicated posts for each cryptocurrency without getting confused by other coins by combining their contexts. Thus, content output will be cleaner, more relevant, and much more engaging across platforms (especially with Binance Square).

Thirdly, it provides better control for how execution flows. If an individual coin creates an error or returns an unexpected response, it will not stop the entire batch from continuing to process, since each will be executed independently. This results in a more resilient and fault-tolerant system.

From a design standpoint, batch processing allows the automation pipeline to be parallel-scalable depending on the configuration. n8n can either execute your items one after another or in a controlled manner, thus allowing you to balance between speed and consistency, given the resources available.

This node essentially serves as a hub for controlling the flow of traffic through the automation pipeline. By separating the execution of 100 selected cryptocurrencies, rather than executing them all together, AI performance is greatly enhanced, system overloads are minimized, and each crypto post produced is high quality and consistent throughout the automated process.

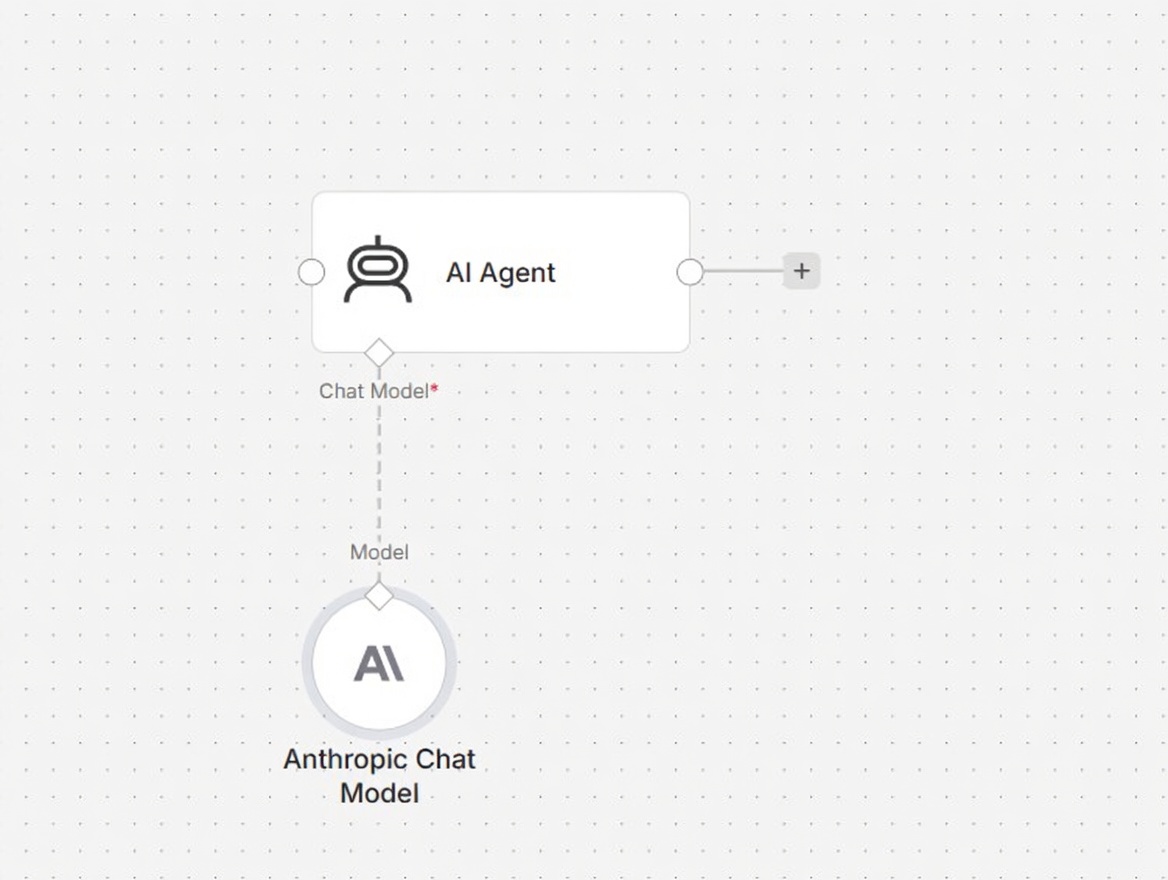

AI Agent Node (Content Generation Logic)

AI Content Generation and Language Model Processing

After reaching the AI Agent Node in our workflow, each crypto symbol batched in the previous step is passed to the LLM along with a prompt. This stage of the workflow is the “Intelligent Layer” of the automated workflow, producing human-readable, engaging, and publisher-ready content from raw market identifiers.

At this time in the workflow, the input is no longer a dataset but an individual coin, e.g., BTC, ETH, or SOL. The input is given to the AI with detailed instructions on how to generate the output (what will be produced). The instructions guarantee that the output will be consistent with the structure, tone, and format required for a professional crypto publishing site (such as Binance Square) by providing strictly defined requirements for each post.

The AI will first convert the cryptocurrency symbol to its full name (for example, for BTC, it will interpret this as “Bitcoin”; for ETH, it will be “Ethereum”; and for SOL, it will be “Solana”). The AI will then generate a short, impactful post focused on that cryptocurrency. This limited word count (500 characters or fewer) will result in a clearer, more digestible message for a general audience that may be unfamiliar with crypto abbreviations.

From there, the AI will be limited to 500 characters or fewer to create a concise, effective, and optimally readable post for social media. Creating shorter posts will lead to higher engagement rates and allow them to be formatted correctly for each platform.

$ABC and $XYZ are common examples of how to format your crypto-related content with the dollar sign and the associated coin ticker in a way that is recognized within cryptocurrency communities. This formatting style allows the writer or creator of crypto-related content to identify the specific asset being referenced and align with market-related communication styles.

Also, as a rule, the AI is directed to refrain from using any kind of emojis, decorative characters/symbols or any other such character/symbol to maintain the integrity of the output; ensuring content written for the finance/analytic type environments or any other type of analytic based content remains clean and professional; by eliminating unnecessary symbols will also create better compatibility with a wide range of API’s and publishing or distribution systems that do not support current formatting styles.

The generated content must be professional and informative, with a focus on the marketplace. Rather than using casual language or promotional verbiage, AI will deliver structured financial insights into market conditions, reflecting market awareness and trading relevance. Consequently, the content produced is also perceived as more credible and aligned with real-time discussions about cryptocurrency.

Language Model Node and AI Configuration

An AI Agent node powered by a Claude Language Model that generates exceptional quality natural language responses with a solid understanding of the context. This model was integrated with n8n as the LangChain-enabled node, enabling structured prompt engineering and controlled behavior when generating AI content.

The model is designed specifically for content generation tasks and prioritizes producing clear, coherent, and contextually relevant responses. The model is also optimized to take short-form inputs (for example, a cryptocurrency symbol) and transform them into contextually relevant, expanded outputs.

The most critical part of the configuration is managing tokens or token usage. Token allotments are set to allow responses that meet the expected criteria (quality) while still leaving room for complete, meaningful content production. Because of this token limit, the model’s behavior remains consistent from execution to execution and does not produce long outputs or outputs that lack any information they should contain.

By controlling how many tokens are used per AI call and how long each call takes, the workflow maintains a balance between quality and efficiency. Each call leveraged the AI’s strengths (including speed and efficiency) to generate a single, high-quality post without unnecessary wordiness or excessive CPU usage.

Therefore, this stage of AI processing serves as the creativity engine for the entire system. It receives structured data from the batching node, utilizes and enforces strict formatting standards, applies a powerful language model (AI) for content generation, and generates relatively uniform and professional content for use in generating and publishing information related to crypto in subsequent stages of processing.

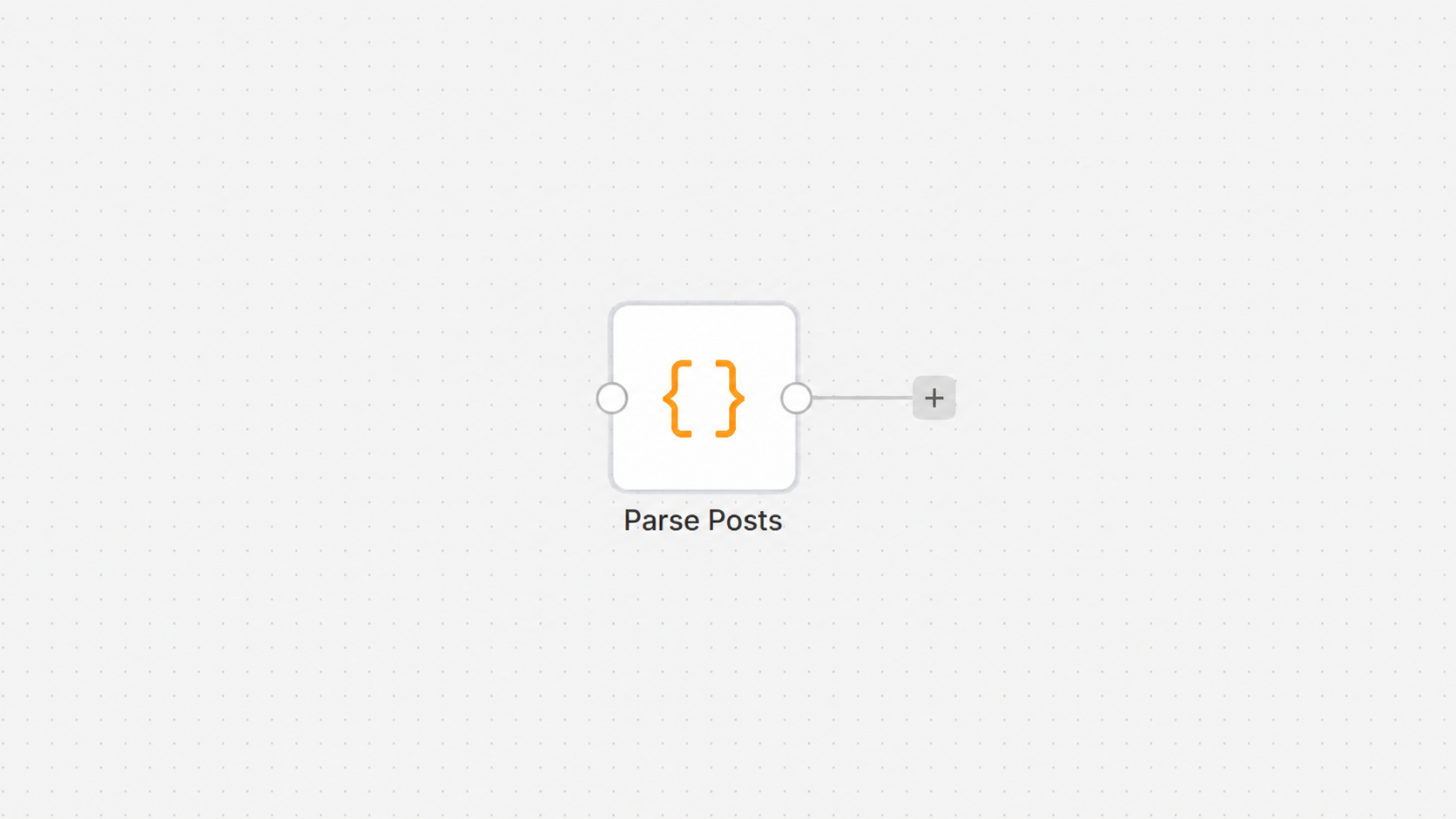

Code Node 2: Parse Posts (AI Output Cleaner)

AI Output Cleaning and Text Formatting Before Publishing

After the AI Agent produces the cryptocurrency content, the next significant step is for a Code node to clean and standardize the AI-generated text before it is submitted to Binance Square. There are differences between outputs that are high quality when produced by an AI agent; however, they may contain formatting inconsistencies. Examples include line breaks, extra spaces between words, and structural differences incompatible with third-party publishing APIs.

The Code Node in the process is responsible for converting each AI-generated post into a single, line-formatted output that meets the stringent content criteria set by Binance Square. The Publishing API expects plain text formatted properly; any unnecessary formatting will be removed before submission.

Step 1: Processing Each AI Output Individually

The first step of our code uses a for Loop to iterate over each output item from the AI Node, mapped individually via a mapping function, allowing us to process each item on its own rather than processing them all together in a single file.

return $input.all().map(item => {

This statement retrieves all items sent to our previous record and runs a transform function on each item independently. In other words, every single AI–generated post will be processed as a separate entity, regardless of whether it is processed together with other posts (this way we ensure that every single piece of content generated/posted there was processed properly and no order will happen either.

This approach is particularly relevant in automated workflows because it preserves the integrity of the data being processed by ensuring that each cryptocurrency posting remains unique.

Step 2: Extracting AI-generated content

Once all inputs are processed independently, the next step would be to extract the actual text produced by the AI model for each piece of content in the automated workflow.

let content = item.json.output || '';

In this line, the system attempts to access the output field from the AI response. This field contains the generated cryptocurrency post created by the language model.

If, for any reason, the output field is missing or undefined, the code assigns an empty string as a fallback value. This prevents the workflow from breaking due to unexpected missing data and ensures smooth execution even in edge cases where the AI response is incomplete.

Step 3: Removing Line Breaks and Formatting Issues

The next phase after extracting content will involve cleaning up the text by eliminating extraneous formatting characters (e.g., line breaks and carriage returns).

content = content.replace(/\n/g, ' ').replace(/\r/g, ' ');

This transformation ensures that all newline characters (\n) and carriage return characters (\r) are replaced with simple spaces.

This is because when submitting content to Binance Square, all submissions must be a single, continuous line (i.e., no line breaks). Otherwise, the API will either reject your request or display your content incorrectly.

By converting multi-line text into a single structured line, all submissions will conform to the publishing workflow and maintain a consistent appearance across submissions.

Step 4: Returning Clean and Standardized Data

Once the data cleaning and formatting are complete, the final step is to produce a structured JSON output containing the processed data to be passed to the next node in the workflow.

return {

json: {

content

}

};

The only thing sent downstream is the cleaned-up content field, with no leftover metadata or formatting from previous workflows. At this point, the data is now optimally ready for publication. Accordingly, because it is clean (a single, cleaned-up line) and structure-consistent, it is ready for direct submission to the Binance Square API as the final workflow step.

Overall Role of This Node

This cleaning node serves as the final link between your AI-generated content and the outside world of publishing. The AI produces creative, high-quality content; therefore, this cleaning node ensures technical compliance with data formats to ensure that the data created by the AI can be published. If there weren’t this step in the process, there could be various inconsistencies or invalid outputs, which would create a significant risk of failure during API submission or would display incorrectly for your intended audience on the target platform.

This cleaning node enforces the requirement for properly structured processing of all content produced by removing the formatting noise associated with content produced inside the AI and standardizing the output format to ensure that each of these new Crypto posts generated by your API Solution will be fully compliant with the requirements set forth by Binance Square for posts submitted from that solution.

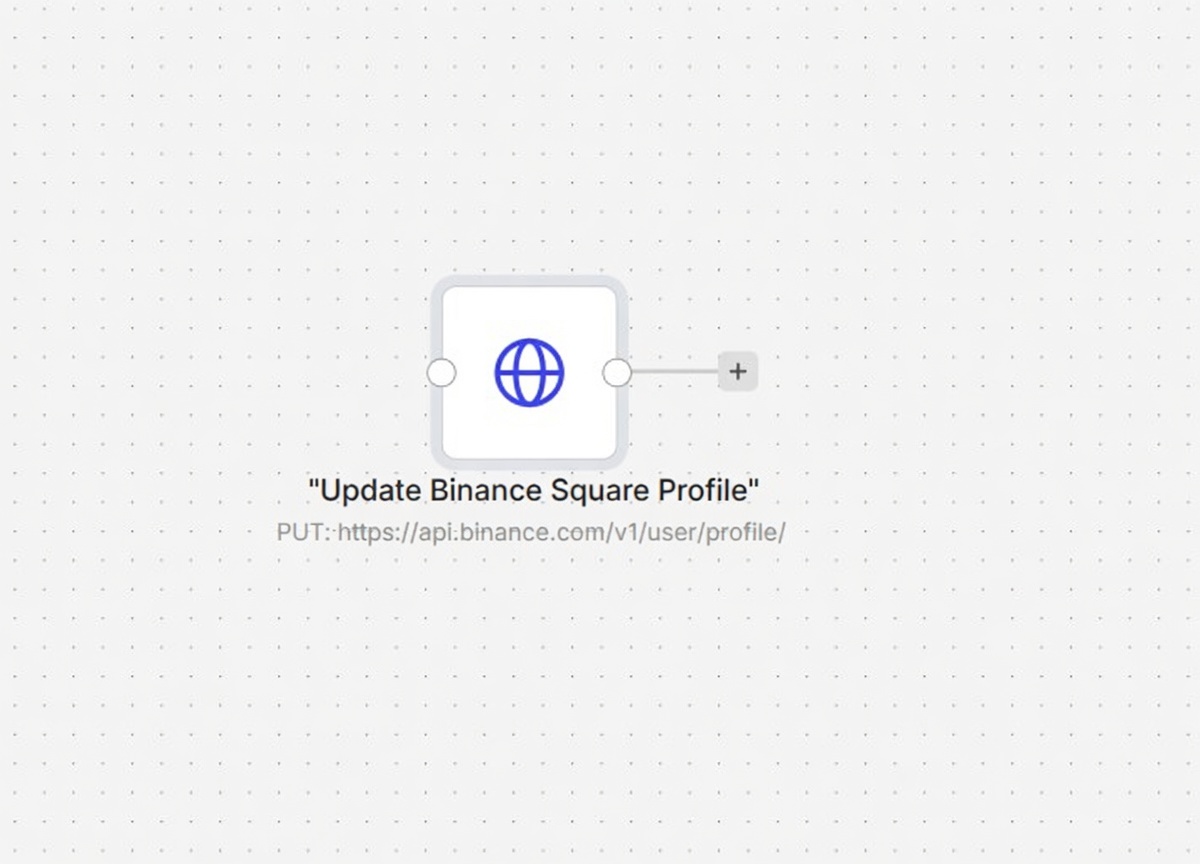

Binance Square Posting Node

Publishing Content to Binance Square via Binance API

In an automation workflow, all previous stages culminate in this stage’s output, the published AI-generated content, by sending the clean and fully processed content to the Binance Square publishing API.

At this point, the content is presented as a clean single line, which meets all the requirements for the publishing platform. The only task remaining is to securely transmit the content to Binance via an authenticated API request.

API Request and Endpoint Function

Using the Binance Square Content Publishing API, the GET (HTTP) request node communicates with an endpoint that accepts user-generated posts via an authenticated request for publication on the platform. The final content payload is included in the body of a POST request.

The request format is designed and controlled to enable accurate, consistent delivery of data to the Binance platform, enabling it to interpret and publish it accurately.

Authentication and Security Headers

Authentication Headers

The node uses specific authentication headers to communicate securely with Binance servers and verify that the requester is a valid user authorized to send requests.

The key header used when accessing the Binance Open API system is the API Key Header. The API key is an access credential used by the server to determine whether or not to allow a request. The server will reject requests without an API key header for security reasons.

In addition to using authentication headers, all requests to the Binance server include a content-type header. These headers define the data sent in the request body. They ensure that Binance correctly interprets and receives the request body as JSON-structured content.

Content Payload Structure

The final AI-generated post is sent inside a specific field called bodyTextOnly. This field is required by the Binance Square API to define the actual text content of the post.

Given how the request body is created, only the cleaned-up, formatted text will be sent, not any extraneous metadata or workflow data.

This design keeps the payload light and limited to only the contents to be published. Therefore, both the reliability and performance of the API request will be enhanced.

Execution and Publishing Process

After the successful execution of an HTTP Request Node, the Binance API will receive the content to be published via Binance Square. At this point, the system will validate the request, authenticate the credentials, and publish the content to the platform.

If the request is valid and all required fields are properly formatted, the post will become visible to all users within seconds of its creation on the Binance Square User Portal. This completes the entire automation process from data extraction to content publishing.

Role of This Node in the Workflow

This last node serves as the output gateway for the entire solution. While all previous nodes focus on collecting, processing, and generating data with AI, this node’s role is to send the final result to an external platform.

The following items are ensured by this node:

Contents are securely transmitted.

API authentication is valid.

Data formatting complies with Binance requirements.

Post is successfully published without requiring manual intervention.

Without this step, the entire workflow would be considered an internal automation with no external output. This final node turns the entire pipeline into a fully functional publishing engine capable of continuously posting AI-generated crypto content directly to Binance Square.

Final System Outcome and Overall Impact

The final outcome of this workflow is a fully autonomous crypto content-generation and publishing engine that operates continuously without human involvement. It transforms raw, high-volume financial market data into structured, readable, and engaging social media posts that are automatically published on Binance Square. This creates a complete end-to-end automation pipeline in which data collection, processing, intelligence, content creation, formatting, and publishing all occur within a single coordinated system.

At the end of the workflow execution, each processed cryptocurrency symbol yields a unique AI-generated post that is clean, formatted, and ready for public consumption. These posts are not generic outputs but are dynamically created based on real-time market conditions, so the content always reflects current market activity, such as price movements, trading volume, and volatility trends. This enables the system to produce highly relevant and timely crypto insights at scale.

One of the most important outcomes of this system is complete automation. Once the Cron trigger activates the workflow, the entire pipeline runs automatically in cycles, fetching new market data, selecting relevant coins, generating AI content, cleaning it, and publishing it to Binance Square. There is no need for manual monitoring, content writing, or posting. This reduces operational effort to almost zero while maintaining continuous content output.

Another key outcome is content consistency. Because the workflow enforces strict rules for AI prompting and post-processing, every generated post follows the same structure, tone, and formatting. Each post starts with a cryptocurrency symbol, includes a professional market-focused message, avoids emojis or unnecessary symbols, and stays within a controlled character limit. This ensures that all content published through the system looks uniform and professionally curated.

From a technical perspective, the system achieves high efficiency and scalability. By using batch processing and individual execution for each coin, the workflow avoids performance bottlenecks and ensures smooth AI processing even when handling large datasets. The separation of responsibilities between data filtering, AI generation, and text cleaning allows each component to operate independently while contributing to the final output.

The system also ensures reliability and fault tolerance. If one coin fails during processing, it does not affect the rest of the workflow. Each execution cycle is isolated, meaning the system can continue running without interruption even in the presence of partial errors or unexpected API responses. This makes the architecture robust for long-term automated operation.

Ultimately, the system is a scalable content automation engine that turns live cryptocurrency market data into continuous AI-generated social media posts, automatically published in real time. It functions as a self-sustaining digital publishing system that operates 24/7, delivering consistent, structured, and market-relevant content without requiring any human intervention.